Ekta Sood

Institute for Visualisation and Interactive Systems, University of Stuttgart

+49 711 685 60141

Pfaffenwaldring 5a, 70569 Stuttgart, Germany

University of Stuttgart, SimTech Building, Room 01.033

Biography

Ekta Sood was a PhD student in the Collaborative Artificial Intelligence group from 2019 to 2024. She holds a Bachelor's degree in Cognitive Science from the University of California, Santa Cruz, and a Master's degree in Computational Linguistics from the University of Stuttgart. Her research interests include multimodal modeling, attention and memory in deep learning, affective computing and emotion analysis, and behavioral neuroscience. Ekta was awarded a Google scholarship for 2019/2020 and was a Research Scientist Intern at Meta (USA) in 2022–2023.

Teaching

- 2022

- Machine Perception and Learning (Tutor), Master

- 2020

- Machine Learning and Computer Vision for HCI (Fachpraktikum), Master

- 2019

- Machine Learning and Computer Vision for HCI (Fachpraktikum), Master

- Intelligent User Interfaces (Hauptseminar), Master

Supervision

- 2024

- Analyzing Neural Attention in Chart Question Answering (M.Sc.) Yingpeng Ma§§

- 2023

- Offline Resumption Detection in Reading (B.Sc.) Abel Gitzing+

- 2022

- Video-based Representation Learning for Emotion Recognition (M.Sc.) Shangrui Nie**

- Interpreting Neural Attention in NLP with Human Visual Attention (Research Project) Shangrui Nie**

- Visual Question Answering through Attention Modelling with Curiosity-driven Reinforcement Learning (M.Sc.) Stoyan Dimitrov*

- Get Into Their Heads: Disentangling Relevancy and Attention in Multimodal Transformers (M.Sc.) Fabian Koegel

- Investigating Multi-modal Human-like Attention Integration for Visual Question Answering (M.Sc.) Ann-Sophia Mueller

- 2021

- Calibration of an Eyetracker While Reading (Bachelor Research Project) Hallach et al.

- Gaze to Text and Gaze to Image Generation (M.Sc.) Keerthana Jaganathan

- Gaze Dataset Collection Study for Visually Grounded Language Modeling Tasks (InfoTech Project) Fabian Koegel

- Image Reconstruction from Human Brain Activity by Variational Autoencoder and Adversarial Learning (M.Sc.) Mariia Podguzova**

- 2020

- Fast-Learning System in Multi-Modal Context (M.Sc.) Adnen Abdessaied

- Incorporating Gaze and Speech Signals in Multi-modal Emotion Recognition (B.Sc.) Ahmed Abdou

- 2019

- Analyzing Human Gaze Information for Mental Image Synthetization (M.Sc.) Florian Strohm

** Co-supervised with Florian Strohm

§§ Co-supervised with Yao Wang and Susanne Hindennach

+ Co-supervised with Francesca Zermiani and Chuhan Jiao

Publications

- InteRead: An Eye Tracking Dataset of Interrupted Reading

Proc. 31st Joint International Conference on Computational Linguistics, Language Resources and Evaluation (LREC-COLING), pp. 9154–9169, 2024.

- Impact of Privacy Protection Methods of Lifelogs on Remembered Memories

Proc. ACM SIGCHI Conference on Human Factors in Computing Systems (CHI), pp. 1–10, 2023.

- Multimodal Integration of Human-Like Attention in Visual Question Answering

Proc. Workshop on Gaze Estimation and Prediction in the Wild (GAZE), CVPRW, pp. 2647–2657, 2023.

- Improving Neural Saliency Prediction with a Cognitive Model of Human Visual Attention

Proc. Annual Meeting of the Cognitive Science Society (CogSci), pp. 3639–3646, 2023.

- Facial Composite Generation with Iterative Human Feedback

Proc. 1st Gaze Meets ML Workshop, PMLR, pp. 165–183, 2023.

- Video Language Co-Attention with Multimodal Fast-Learning Feature Fusion for VideoQA

Proc. 7th Workshop on Representation Learning for NLP (RepL4NLP), pp. 1–12, 2022.

- Gaze-enhanced Crossmodal Embeddings for Emotion Recognition

Proc. International Symposium on Eye Tracking Research and Applications (ETRA), pp. 1–18, 2022.

-

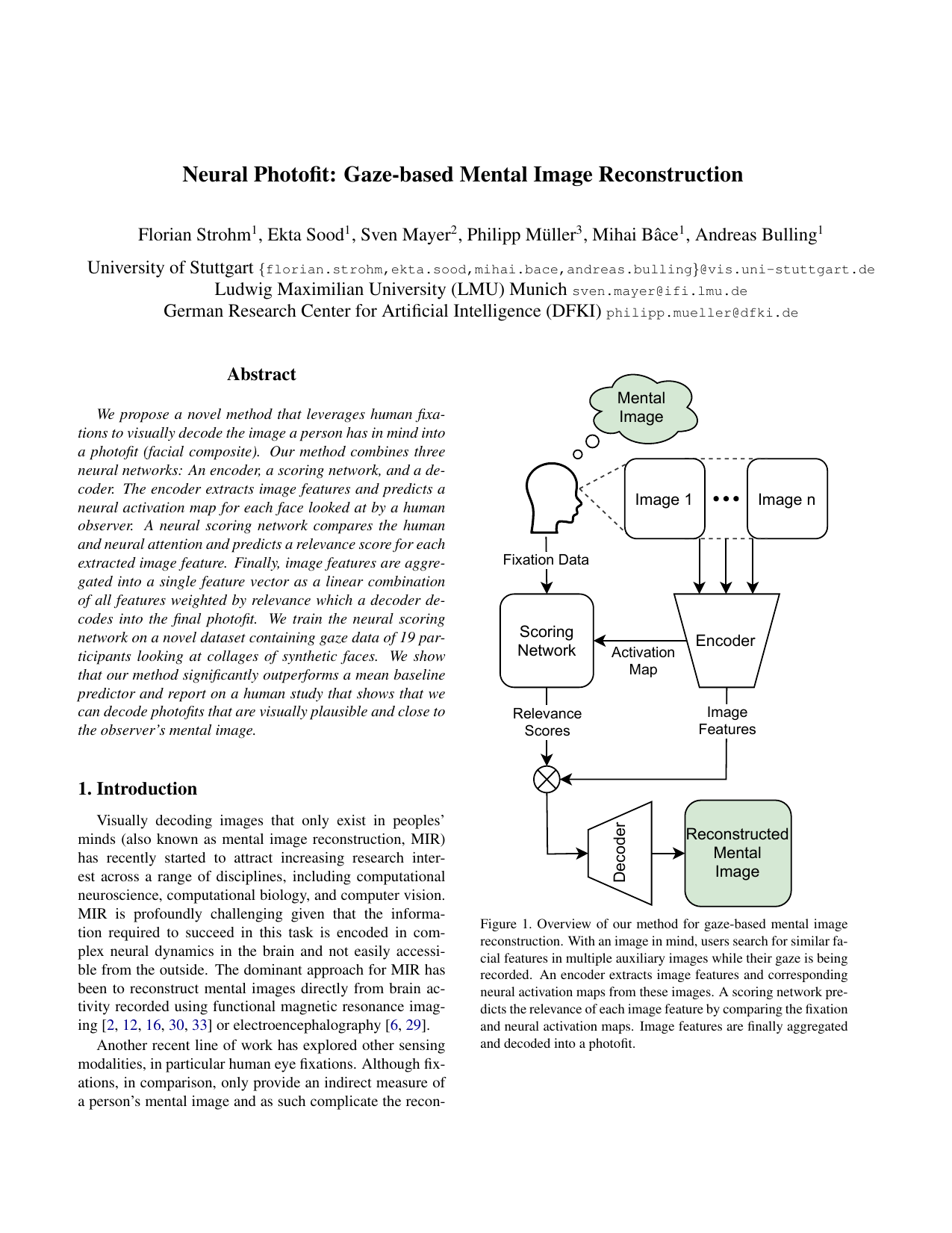

Neural Photofit: Gaze-based Mental Image Reconstruction

Proc. IEEE International Conference on Computer Vision (ICCV), pp. 245-254, 2021.

- VQA-MHUG: A gaze dataset to study multimodal neural attention in VQA

Proc. ACL SIGNLL Conference on Computational Natural Language Learning (CoNLL), pp. 27–43, 2021.

- Anticipating Averted Gaze in Dyadic Interactions

Proc. ACM International Symposium on Eye Tracking Research and Applications (ETRA), pp. 1–10, 2020.

- Interpreting Attention Models with Human Visual Attention in Machine Reading Comprehension

Proc. ACL SIGNLL Conference on Computational Natural Language Learning (CoNLL), pp. 12–25, 2020.

- Improving Natural Language Processing Tasks with Human Gaze-Guided Neural Attention

Advances in Neural Information Processing Systems (NeurIPS), pp. 1–15, 2020.