Multimodal Personality Synthesis on 3D Avatars

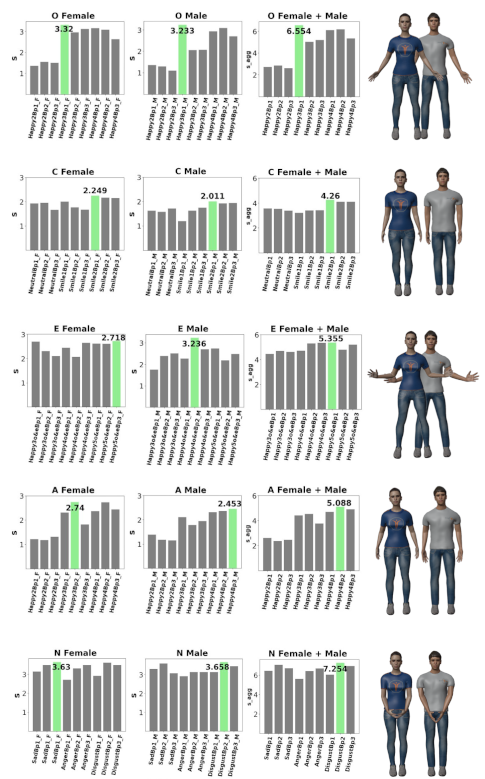

Description: 3D avatars are human-like intelligent user interfaces. This project aims to synthesise personality traits on 3D avatars through verbal and non-verbal features, e.g., facial expression, body posture and movement, gaze, interaction, body shape, dress style, and speaking style.

Steps: (1) Identify features related to personality in the existing literature. (2) Adapt these features to 3D avatars. (3) Conduct a study in which participants report their perceptions of the created avatars. (4) Analyse the study results and derive insights into personality synthesis.

Supervisor: Guanhua Zhang

Distribution: 20% Literature, 40% Data preparation, 20% User study, 20% Analysis and discussion

Requirements: Strong programming skills, knowledge of statistical analysis

Literature: Xueni Pan et al. 2015. Virtual character personality influences participant attitudes and behavior – an interview with a virtual human character about her social anxiety. Frontiers in Robotics and AI, vol. 2, no. 1.

Melodie Vidal et al. 2015. The royal corgi: Exploring social gaze interaction for immersive gameplay. Proceedings of the 33rd Annual ACM Conference on Human Factors in Computing Systems (CHI).

Yining Lang et al. 2019. 3D face synthesis driven by personality impression. Proceedings of the AAAI Conference on Artificial Intelligence, vol. 33, no. 1.

Sinan Sonlu, Uğur Güdükbay, and Funda Durupinar. 2021. A conversational agent framework with multi-modal personality expression. ACM Transactions on Graphics (TOG), vol. 40, no. 1.